The Next Step in SSD Evolution: NVMe Zoned Namespaces Explained

by Billy Tallis on August 6, 2020 12:00 PM EST - Posted in

- SSDs

- Storage

- Western Digital

- NVMe

- SMR

- Radian Memory

In June we saw an update to the NVMe standard. The update defines a software interface for reading and writing to drives in a way that matches how SSDs and NAND flash actually work.

Instead of emulating the traditional block device model that SSDs inherited from hard drives and earlier storage technologies, the new NVMe Zoned Namespaces optional feature allows SSDs to implement a different storage abstraction over flash memory. This is quite similar to the extensions SAS and SATA have added to accommodate Shingled Magnetic Recording (SMR) hard drives, with a few extras for SSDs. ‘Zoned’ SSDs with this new feature can offer better performance than regular SSDs, with less overprovisioning and less DRAM. The downside is that applications and operating systems have to be updated to support zoned storage, but that work is well underway.

The NVMe Zoned Namespaces (ZNS) specification has been ratified and published as a Technical Proposal. It builds on top of the current NVMe 1.4a specification, in preparation for NVMe 2.0. The upcoming NVMe 2.0 specification will incorporate all the approved Technical Proposals, but also reorganize that same functionality into multiple smaller component documents: a base specification, plus one for each command set (block, zoned, key-value, and potentially more in the future), and separate specifications for each transport protocol (PCIe, RDMA, TCP). The standardization of Zoned Namespaces clears the way for broader commercialization and adoption of this technology, which so far has been held back by vendor-specific zoned storage interfaces and very limited hardware choices.

Zoned Storage: An Overview

The fundamental challenge of using flash memory in a solid state drive is that all of our computers are built around how hard drives work, and flash memory doesn't behave like a hard drive. Flash is organized very differently from a hard drive, and its performance advantages make it worth the trouble of optimizing our computers for it.

Magnetic platters are a fairly analog storage medium, with no inherent structure to dictate features like sector sizes. The long-lived standard of 512-byte sectors was chosen merely for convenience, and enterprise drives now support 4kB sectors as we reach drive capacities in the multi-TB range. By contrast, a flash memory chip has several levels of structure baked into the design. The most important numbers are the page size and erase block size. Data can be read with page size granularity (typically on the order of several kB) and an empty page can be written to with a program operation, but erase operations clear an entire multi-MB block. The substantial size mismatch between read/program operations and erase operations is a complication that ordinary mechanical hard drives don't have to deal with. The limited program/erase cycle endurance of flash memory also adds to the challenge: the fewer writes the flash has to absorb, the longer it lasts.
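To make the page and erase block mismatch concrete, here is a minimal Python sketch of a single erase block. The class name, the 16 kB page size and the 256 pages per block (a 4 MB block) are illustrative assumptions, not figures for any particular NAND part, and the model ignores other real-world constraints.

```python
class EraseBlock:
    """Toy model of one NAND erase block (all sizes are illustrative assumptions)."""

    def __init__(self, pages_per_block=256, page_size=16 * 1024):
        self.page_size = page_size
        self.pages = [None] * pages_per_block  # None means the page is erased
        self.erase_count = 0                   # program/erase cycles used so far

    def read(self, page_no):
        # Reads work at page granularity.
        return self.pages[page_no]

    def program(self, page_no, data):
        # Only an erased page can be programmed; there is no in-place overwrite.
        if self.pages[page_no] is not None:
            raise ValueError("page already programmed; erase the whole block first")
        if len(data) > self.page_size:
            raise ValueError("data larger than one page")
        self.pages[page_no] = data

    def erase(self):
        # Erase clears every page in the block and consumes one P/E cycle.
        self.pages = [None] * len(self.pages)
        self.erase_count += 1


block = EraseBlock()
block.program(0, b"hello")
try:
    block.program(0, b"world")  # rejected: page 0 is not empty
except ValueError as err:
    print("rejected:", err)
block.erase()                   # wipes all 256 pages to change one of them
block.program(0, b"world")
```

Changing a single page means erasing the whole block, which is why real drives write updated data elsewhere and clean up old blocks later.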

Almost all SSDs today are presented to software as an abstraction of a simple HDD-like block storage device with 512-byte or 4kB sectors. This hides all the complexities of SSDs that we've covered in detail over the years, such as page and erase block sizes, wear leveling and garbage collection. This abstraction is also part of why SSD controllers and firmware are so much bigger and more complicated (and more bug-prone) than hard drive controllers. For most purposes, the block device abstraction is still the right compromise, because it allows unmodified software to enjoy most of the performance benefits of flash memory, and the downsides like write amplification are manageable.
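As a rough illustration of where write amplification comes from, the sketch below uses a simplified model of garbage collection: before a block can be erased, its still-valid pages are copied elsewhere, and only the remaining space is available for new host data. The function name and the assumption that every reclaimed block holds the same number of valid pages are ours; real drives are considerably more complicated.

```python
def gc_write_amplification(valid_pages, pages_per_block):
    """Flash pages written per page of host data, under a simplified model
    where every reclaimed block still holds `valid_pages` live pages."""
    if not 0 <= valid_pages < pages_per_block:
        raise ValueError("valid_pages must be in [0, pages_per_block)")
    freed = pages_per_block - valid_pages  # room gained for new host data
    nand_writes = valid_pages + freed      # copied pages plus new host data
    return nand_writes / freed


for valid in (0, 128, 192, 224):
    print(valid, gc_write_amplification(valid, 256))
```

Under this model, with 256 pages per block, reclaiming blocks that are half valid gives a factor of 2, and blocks that are 7/8 valid give a factor of 8. The fuller the blocks being reclaimed, the more the drive writes internally for each host write.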

For years, the storage industry has been exploring alternatives to the block storage abstraction. There have been several proposals for Open Channel SSDs, which expose many of the gory details of flash memory directly to the host system, moving many of the responsibilities of SSD firmware over to software running on the host CPU. The various open channel SSD standards that have been promoted have struck different balances along the spectrum, from a typical SSD with a fully drive-managed flash translation layer (FTL) to a fully software-managed solution. The industry consensus was that some of the earliest standards, like the LightNVM 1.x specification, exposed too many details, requiring software to handle some differences between different vendors' flash memory, or between SLC, MLC, TLC, etc. Newer standards have sought to find a better balance and a level of abstraction that will allow for easier mass adoption while still allowing software to bypass the inefficiencies of a typical SSD.

Tackling the problem from the other direction, the NVMe standard has been gaining features that allow drives to share more information with the host about optimal patterns for data access and layout. For the most part, these are hints and optional features that software can take advantage of. This works because software that isn't aware of these features will still function as usual. Directives and Streams, NVM Sets, Predictable Latency Mode, and various alignment and granularity hints have all been added over the past few revisions of the NVMe specification to make it possible for software and SSDs to better cooperate.

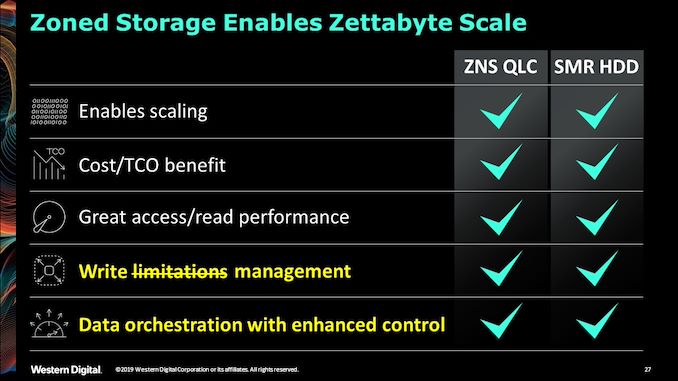

Lately, a third approach has been gaining momentum, influenced by the hard drive market. Shingled Magnetic Recording (SMR) is a technique for increasing storage density by partially overlapping tracks on hard drive platters. The downside of this approach is that it's no longer possible to directly modify arbitrary bytes of data without corrupting adjacent overlapping tracks, so SMR hard drives group tracks into zones and only allow sequential writes within a zone. This has severe performance implications for workloads that include random writes, which is part of why drive-managed SMR hard drives have seen a mixed reception at best in the marketplace. However, in the server storage market, host-managed SMR is also a viable option: it requires the OS, filesystem and potentially the application software to be directly aware of zones, but making the necessary software changes is not an insurmountable challenge when working with a controlled environment.
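The sequential-write rule can be sketched as a zone with a write pointer. This is a simplified Python model: the class, its method names and the block-granular addressing are hypothetical and do not correspond to the actual SAS/SATA zoned commands or to the NVMe ZNS command set.

```python
class Zone:
    """Toy model of a sequential-write-only zone (names are hypothetical)."""

    def __init__(self, start_lba, size_blocks):
        self.start_lba = start_lba
        self.size_blocks = size_blocks
        self.write_pointer = start_lba  # next block address that may be written
        self.data = {}

    def write(self, lba, blocks):
        # Writes must land exactly at the write pointer and stay inside the zone.
        if lba != self.write_pointer:
            raise ValueError(f"write at {lba} rejected; write pointer is at {self.write_pointer}")
        if lba + len(blocks) > self.start_lba + self.size_blocks:
            raise ValueError("write would cross the end of the zone")
        for offset, block in enumerate(blocks):
            self.data[lba + offset] = block
        self.write_pointer += len(blocks)

    def read(self, lba):
        # Reads may be random, but only below the write pointer.
        if not self.start_lba <= lba < self.write_pointer:
            raise ValueError("block has not been written")
        return self.data[lba]

    def reset(self):
        # Discard the whole zone's contents and rewind the write pointer.
        self.data.clear()
        self.write_pointer = self.start_lba


zone = Zone(start_lba=0, size_blocks=8)
zone.write(0, [b"a", b"b"])
try:
    zone.write(0, [b"x"])  # rejected: the zone cannot be overwritten in place
except ValueError as err:
    print("rejected:", err)
zone.reset()               # modifying old data means resetting the whole zone
zone.write(0, [b"x"])
```

Host-managed software has to be written around this pattern: append to a zone, read from anywhere in it, and reset the whole zone when its contents are no longer needed.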

The zoned storage model used for SMR hard drives turns out to also be a good fit for use with flash, and is a precursor to NVMe Zoned Namespaces. The zone-like structure of SMR hard drives mirrors the page and erase block structure of an SSD. The restrictions on writes aren't an exact match, but they come close enough.

In this article, we’ll cover what NVMe Zoned Namespaces are, and why they matter.

45 Comments


jeremyshaw - Monday, August 10, 2020

The early 70s and 80s timeframe saw CPUs and memory scaling roughly the same, year to year. After a while, memory advanced a whole lot slower, necessitating the multiple tiers of memory we have now, from L1 cache to HDD. Modern CPUs didn't become lots of SRAM with an attached ALU just because CPU designers love throwing their transistor budget into measly megabytes of cache. They became that way simply because other tiers of memory and storage are just too slow.

WorBlux - Wednesday, December 22, 2021

Modern CPUs have instructions that let you skip cache, and then there was SPARC with streaming accelerators, where you could unleash a true vector/CUDA style instruction directly against a massive chunk of memory.

Arbie - Thursday, August 6, 2020

An excellent article; readable and interesting even to those (like me) who don't know the tech but with depth for those who do. Right on the AT target.

Arbie - Thursday, August 6, 2020

And - I appreciated the "this is important" emphasis so I knew where to pay attention.

ads295 - Friday, August 7, 2020

+1 all the way

batyesz - Thursday, August 6, 2020

UltraRAM is the next big step in the computer market.

tygrus - Thursday, August 6, 2020

The first 512-byte sectors I remember go back to the days of IBM XT compatibles, 5¼ inch floppies, 20MB HDD, MSDOS, FAT12 & FAT16. That's well over 30 years of baggage, and it's heavy to carry around. They moved to 32bit based file systems and 4KB blocks/clusters or larger (eg. 64 or 128bit addresses, 2MB blocks/clusters are possible).

It wastes space to save small files/fragments in large blocks but it also wastes resources to handle more locations (smaller blocks) with longer addresses taking up more space and processing.

Management becomes more complex to overcome the quirks of HW & increased capacities.

WaltC - Tuesday, August 11, 2020

Years ago, just for fun, I formatted a HD with 1k clusters because I wanted to see how much of a slowdown the increased overhead would create--I remember it being quite pronounced and quickly jumped back to 4k clusters. I was surprised at how much of a slowdown it created. That was many years ago--I can't even recall what version of Windows I was using at the time...;)

Crazyeyeskillah - Thursday, August 6, 2020

I'll ask the dumb questions no one else has posted:

What kind of performance numbers will this equate to?

Cheers

Billy Tallis - Thursday, August 6, 2020

There are really too many variables and too little data to give a good answer at this point. Some applications will be really ill-suited to running on zoned storage, and may not gain any performance. Even for applications that are a good fit for zoned storage, the most important benefits may be to latency/QoS metrics that are less straightforward to interpret than throughput.

The Radian/IBM Research case study mentioned near the end of the article claims 65% improvement to throughput and 22x improvement to some tail latency metric for a Sysbench MySQL test. That's probably close to best-case numbers.