Intel Core i7-10700 vs Core i7-10700K Review: Is 65W Comet Lake an Option?

by Dr. Ian Cutress on January 21, 2021 10:30 AM EST

Posted in: CPUs, Intel, Core i7, Z490, 10th Gen Core, Comet Lake, i7-10700K, i7-10700

CPU Tests: Encoding

One of the interesting elements on modern processors is encoding performance. This covers two main areas: encryption/decryption for secure data transfer, and video transcoding from one video format to another.

In the encrypt/decrypt scenario, how data is transferred and by what mechanism is pertinent to on-the-fly encryption of sensitive data, a process that more modern devices are leaning on for software security.

Video transcoding as a tool to adjust the quality, file size, and resolution of a video file has boomed in recent years, whether to provide the optimum video for a device before consumption or for game streamers who want to upload the output from their video camera in real time. As we move into live 3D video, this task will only get more strenuous, and it turns out that the performance of certain algorithms is a function of the input/output of the content.

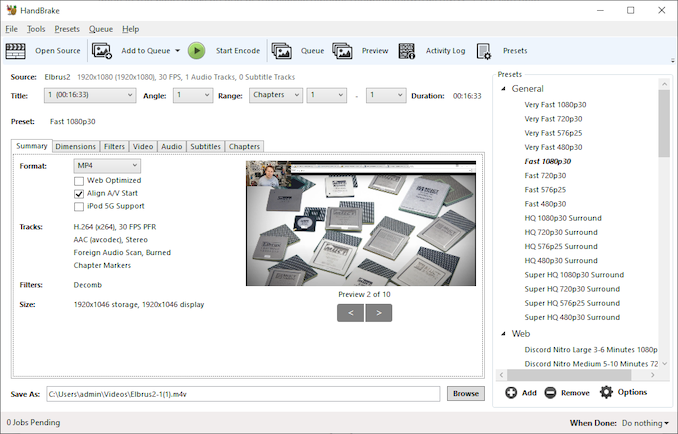

HandBrake 1.32

Video transcoding (both encode and decode) is a hot topic in performance metrics as more and more content is being created. The first consideration is the standard in which the video is encoded, which can be lossless or lossy, and can trade performance for file size, quality for file size, or some mix of these; those choices affect both encoding and decoding rates. Alongside Google's favorite codecs, VP9 and AV1, there are others that are prominent: H.264, the older codec, is practically everywhere and is optimized for 1080p video, while HEVC (or H.265) aims to provide the same quality as H.264 at a smaller file size (or better quality for the same size). HEVC is important as 4K is streamed over the air, meaning fewer bits need to be transferred for the same quality content. Other codecs designed for specific use cases are coming to market all the time.

HandBrake is a favored tool for transcoding, with the later versions using copious amounts of newer APIs to take advantage of co-processors such as GPUs. It is available on Windows through a graphical interface or through the command line; the latter makes our testing easier, as a redirection operator captures the console output.
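As a rough illustration of what command-line automation with captured console output can look like, here is a minimal Python sketch. It is not the script used for this review. It assumes a `HandBrakeCLI` binary is on the PATH and uses its `-i`, `-o`, and `--preset` options; the function names, file names, and the preset shown in the usage note are placeholders chosen for the example.

```python
import subprocess
from pathlib import Path
from typing import List


def build_handbrake_command(source: Path, output: Path, preset: str) -> List[str]:
    """Build an argument list for a HandBrakeCLI transcode using a named preset."""
    return [
        "HandBrakeCLI",        # assumption: the CLI binary is available on PATH
        "-i", str(source),     # input file
        "-o", str(output),     # output file
        "--preset", preset,    # preset name, supplied by the caller
    ]


def run_and_log(command: List[str], log_path: Path) -> int:
    """Run the command, writing stdout and stderr to a log file.

    This plays the role of a shell redirection operator and returns
    the exit code of the process.
    """
    with log_path.open("w", encoding="utf-8") as log:
        result = subprocess.run(
            command, stdout=log, stderr=subprocess.STDOUT, check=False
        )
    return result.returncode
```

A call such as `run_and_log(build_handbrake_command(Path("in.mp4"), Path("out.mp4"), "Fast 1080p30"), Path("encode.log"))` would leave the encoder's console output in `encode.log` for later parsing. The preset in that call is only an example and is not one of the three targets used in our test.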

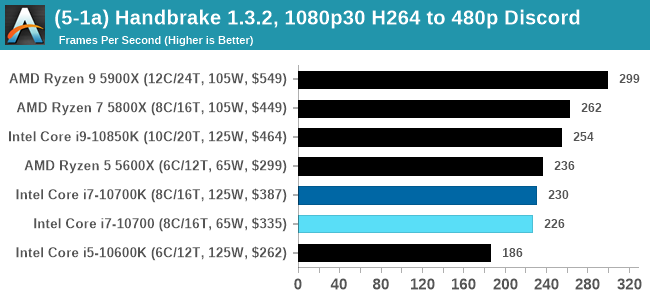

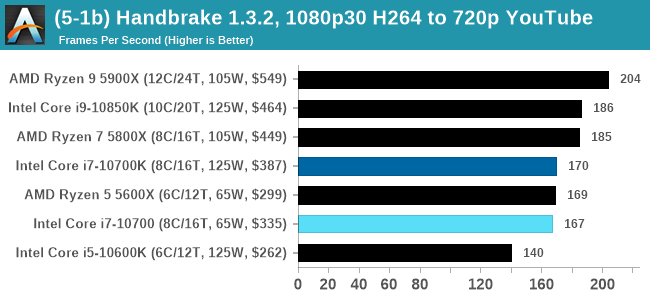

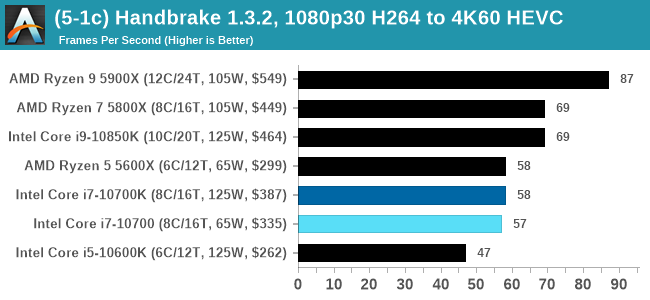

We take the compiled version of this 16-minute YouTube video about Russian CPUs at 1080p30 H.264 and convert it into three different files: (1) 480p30 ‘Discord’, (2) 720p30 ‘YouTube’, and (3) 4K60 HEVC.

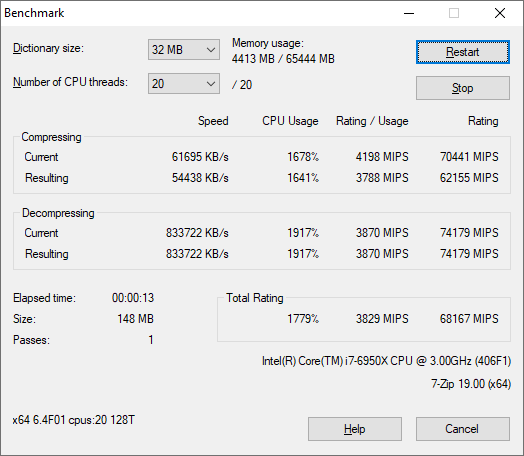

7-Zip 1900

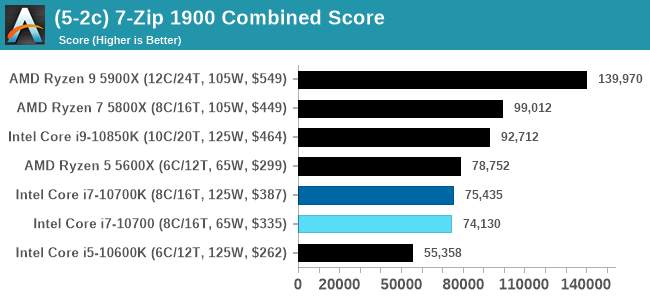

The first compression benchmark tool we use is the open-source 7-Zip, which typically offers good scaling across multiple cores. 7-Zip is the compression tool most cited by readers as one they would rather see benchmarks on, and the program includes a built-in benchmark tool for both compression and decompression.

The tool can either be run from inside the software or through the command line. We take the latter route as it is easier to automate, obtain results, and put through our process. The command line flags available offer an option for repeated runs, and the output provides the average automatically through the console. We direct this output into a text file and regex the required values for compression, decompression, and a combined score.
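The text-file-plus-regex step can be sketched in Python as follows. This is an illustration under assumptions, not our actual script: the sample text is hand-written to resemble a benchmark summary rather than captured from a real run, and treating the third number in each group as the rating is an assumption that should be checked against the output of the 7-Zip version in use.

```python
import re

# Illustrative text only: modeled loosely on a benchmark summary,
# NOT captured from a real run. Real layouts may differ by version.
SAMPLE_OUTPUT = """\
Avr:             780   3400  26500  |              790   3300  26000
Tot:             785   3350  26250
"""

# Assumption: the "Avr:" line holds compression figures left of the bar and
# decompression figures right of it, with the rating last in each group.
AVR_PATTERN = re.compile(
    r"^Avr:\s+(\d+)\s+(\d+)\s+(\d+)\s+\|\s+(\d+)\s+(\d+)\s+(\d+)", re.MULTILINE
)
# Assumption: the "Tot:" line ends with the combined rating.
TOT_PATTERN = re.compile(r"^Tot:\s+(\d+)\s+(\d+)\s+(\d+)", re.MULTILINE)


def parse_benchmark_summary(text):
    """Extract compression, decompression, and combined scores from summary text."""
    avr = AVR_PATTERN.search(text)
    tot = TOT_PATTERN.search(text)
    if avr is None or tot is None:
        raise ValueError("summary lines not found in benchmark output")
    return {
        "compression": int(avr.group(3)),
        "decompression": int(avr.group(6)),
        "combined": int(tot.group(3)),
    }
```

In practice the input would be the contents of the redirected text file rather than `SAMPLE_OUTPUT`. Raising an error on a failed match keeps a layout change from silently producing wrong numbers.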

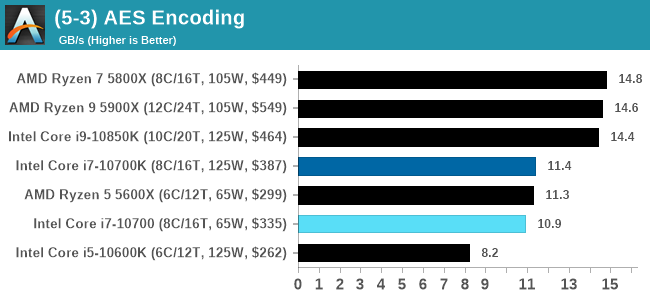

AES Encoding

Algorithms using AES have spread far and wide as a ubiquitous tool for encryption. This is another CPU-limited test, and modern CPUs have special AES pathways to accelerate their performance. We often see scaling in both frequency and cores with this benchmark. We use the latest version of TrueCrypt and run its benchmark mode over 1GB of in-DRAM data. Results shown are the GB/s average of encryption and decryption.

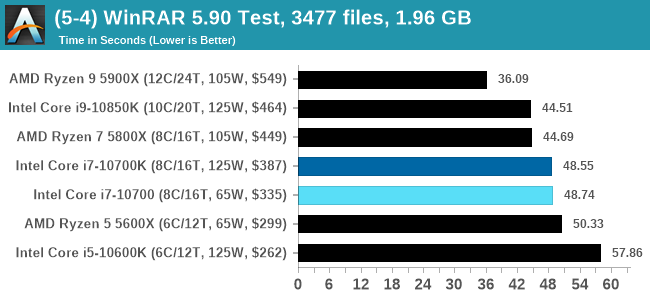

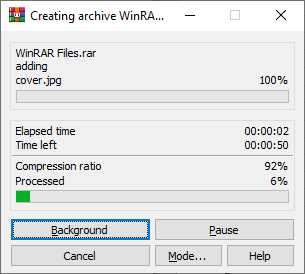

WinRAR 5.90

For the 2020 test suite, we move to the latest version of WinRAR in our compression test. WinRAR in some quarters is more user friendly than 7-Zip, hence its inclusion. Rather than use a benchmark mode as we did with 7-Zip, here we take a set of files representative of a generic stack:

- 33 video files, each 30 seconds, in 1.37 GB,

- 2834 smaller website files in 370 folders in 150 MB,

- 100 Beat Saber music tracks and input files, for 451 MB

This is a mixture of compressible and incompressible formats. The results shown are the time taken to encode the file. Due to DRAM caching, we run the test repeatedly for 20 minutes and take the average of the last five runs, when the benchmark is in a steady state.

For automation, we use AHK’s internal timing tools from initiating the workload until the window closes signifying the end. This means the results are contained within AHK, with an average of the last 5 results being easy enough to calculate.
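For readers who want to reproduce the idea without AHK, the timing and averaging logic can be approximated in Python. This swaps in `subprocess` and `time.perf_counter` in place of AHK's timers and window detection, so it is a sketch of the same measurement rather than our harness; the function names are made up for the example.

```python
import subprocess
import time
from statistics import mean


def time_command(command):
    """Return wall-clock seconds from launching the command until it exits."""
    start = time.perf_counter()
    subprocess.run(command, check=True)
    return time.perf_counter() - start


def steady_state_average(timings, keep_last=5):
    """Average only the final runs, discarding the early runs that are
    still affected by caching."""
    if len(timings) < keep_last:
        raise ValueError("not enough runs to compute a steady-state average")
    return mean(timings[-keep_last:])
```

Collecting one `time_command` result per run into a list and passing it to `steady_state_average` gives the reported figure. Process exit stands in for the window closing, which is a reasonable proxy only if the compression tool exits when the job finishes.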

210 Comments

dullard - Thursday, January 21, 2021

Yes, it is sad that even well respected PhDs in the field can't seem to understand that TDP is not total consumed power. Never has been, never will be. TDP is simply the minimum power to design your cooling system around.

I actually think that Intel went in the right direction with Tiger Lake. It will do everyone a service to drop any mention of TDP solely into the fine print of tech documents because so many people misunderstand it.

Yes, TSMC has a fantastic node right now with lower power that AMD is making good use of. Yes, that makes Intel look bad. Lets clearly state that fact and move on.

Power usage matters for mobile (battery life), servers (cooling requirements and energy costs), and the mining fad (profits). Power usage does not matter to most desktop users.

dullard - Thursday, January 21, 2021

Also don't forget that we are talking about 12 seconds or 28 seconds of more power, then it drops back down unless the motherboard manufacturer overrides it. The costs to desktop users for those few seconds is fractions of a penny.

bji - Thursday, January 21, 2021

"minimum power to design your cooling system around" makes NO SENSE.

You don't design any cooling system to handle the "minimum", you design it to handle the "maximum".

It sounds like you've bought into Intel's convoluted logic for justifying their meaningless TDP ratings?

iphonebestgamephone - Thursday, January 21, 2021

Why are there low end and high end coolers then? Arent the cheap ones for the minimum, in this case 65w?

Spunjji - Friday, January 22, 2021

dullard's comments are, indeed, a post-hoc justification in search of an audience.

dullard - Friday, January 22, 2021

Bji, no, that is not how engineering works. You need to know the failure limit on the minimum side. If your cooling system cannot consistently cool at least 65W, then your product will fail to meet specifications. That is a very important number for a system designer. Make a 60W cooling system around the 10700 chip and you'll have a disaster.

You can always cool more than 65W and have more and/or faster turbos. There is no upper limit to how much cooling capability you can use. A 65W cooler will work, a 125W cooler will work, a 5000 W cooler will work. All you get with better cooling is more turbo, more often. That is a selling point, but that is it - a selling point. It is the 65W number that is the critical design requirement to avoid failures.

edzieba - Friday, January 22, 2021

Minor correction on " Never has been, never will be": TDP and peak package power draw WERE synonymous once, for consumer CPUs, back when a CPU just ran at a single fixed frequency all the time. It's not been true for a very long time, but now persists as a 'widely believed fact'.

Something being true only in very specific scenarios but being applied generally out of ignorance is pretty common in the 'enthusiast' world: RAM heatsinks (if you're not running DDR2 FBDIMMs they're purely decorative), m.2 heatsinks (cooling the NAND dies is actively harmful, cooling the controller was only necessary for a single model of OEM-only brown-box Samsung drives because nobody had the tool to tell the controller to not run at max power all the time), hugely oversized WC radiators (from the days when rad area was calculated assuming repurposed low-density-high-flow car AC radiators, not current high-density-low-flow radiators), etc.

Even now "more cores = more better" in the consumer market, despite very few consumer-facing workloads spanning more than a handful of threads (and rarely maxing out more than a single core).

dullard - Friday, January 22, 2021

I'll give you credit there. I should have said "not since turbo" instead of "never has been". Good catch. I wish there was an edit button.

Spunjji - Friday, January 22, 2021

What's really sad is that you apparently prefer to write a long comment trying to dunk on the author, rather than read the article he wrote for you to enjoy *for free*.

"I actually think that Intel went in the right direction with Tiger Lake"

You think poorly.

"Yes, TSMC has a fantastic node right now with lower power that AMD is making good use of. Yes, that makes Intel look bad. Lets clearly state that fact and move on."

Aaaand there's the motivation for the sour grapes.

dullard - Friday, January 22, 2021

Spunjji, I must assume since you didn't have anything to actually refute what I said, that you have nothing to refute it and instead choose to bash the messenger. Thanks for backing me up!